Abstract

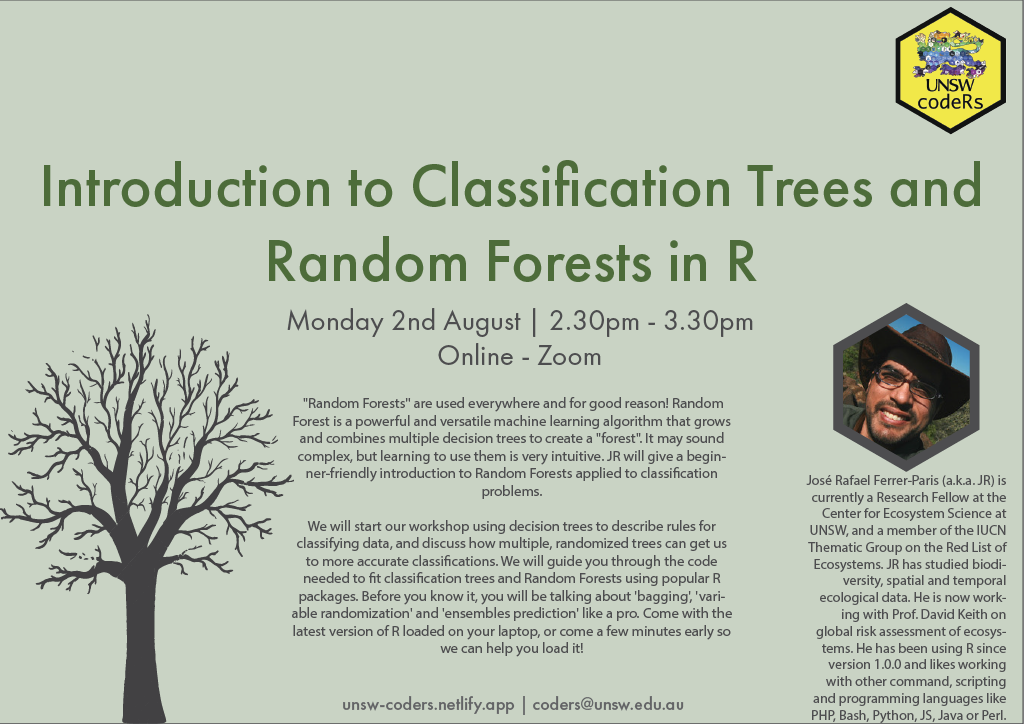

“Random Forests” are used everywhere, and for good reason! Random Forest is a powerful and versatile machine learning algorithm that grows and combines multiple decision trees to create a “forest”. It sounds complex, but learning to use it is intuitive, especially if you have a UNSW codeRs workshop to help you.

Resources created by JR

This GitHub repository contains all the material discussed in the workshop and will help you follow the recording. It includes a short presentation on using decision trees to describe rules for classifying data, and on how multiple randomized trees can lead to more accurate classifications. Two R Markdown documents are also available to guide you through the code needed to fit classification trees and Random Forests using popular R packages.
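To give a flavour of what fitting these models looks like, here is a minimal sketch. It is not taken from the workshop materials: the choice of the `rpart` and `randomForest` packages, the built-in `iris` data, the 70/30 split and the `ntree = 500` setting are all assumptions made for illustration, and the R Markdown documents in the repository may use different packages and data.

```r
# Assumption: rpart and randomForest stand in for the "popular R packages";
# the workshop's own documents may use others.
library(rpart)
library(randomForest)

set.seed(42)  # make the random split and the forest reproducible

# Hold out 30% of the built-in iris data as a test set
n <- nrow(iris)
train_idx <- sample(n, size = round(0.7 * n))
train <- iris[train_idx, ]
test  <- iris[-train_idx, ]

# A single classification tree: one set of if/else rules
tree_fit  <- rpart(Species ~ ., data = train, method = "class")
tree_pred <- predict(tree_fit, newdata = test, type = "class")

# A random forest: many randomized trees whose votes are combined
rf_fit  <- randomForest(Species ~ ., data = train, ntree = 500,
                        importance = TRUE)
rf_pred <- predict(rf_fit, newdata = test)

# Compare accuracy on the held-out data
tree_acc <- mean(tree_pred == test$Species)
rf_acc   <- mean(rf_pred == test$Species)
cat("Tree accuracy:  ", tree_acc, "\n")
cat("Forest accuracy:", rf_acc, "\n")

# Which predictors the forest relied on most
print(importance(rf_fit))
```

On a dataset as small and clean as `iris`, the two models may perform similarly; the benefit of combining many trees tends to show more clearly on larger, noisier data.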

Additional Resources

- Davis David: Random Forest Classifier Tutorial - How to Use Tree-Based Algorithms for Machine Learning

- Evan Muzzall and Chris Kennedy: Introduction to Machine Learning in R

- GitHub link: Machine Learning in R

- GitHub link: Machine Learning with Tidymodels

- Dave Tang: Building a classification tree in R

- Zach @ Statology: How to Fit Classification and Regression Trees in R

- Ben Gorman: Decision Trees in R using rpart

- Victor Zhou: Random Forests for Complete Beginners

- Bradley Boehmke & Brandon Greenwell: Hands-On Machine Learning with R

- Julia Kho: Why Random Forest is My Favorite Machine Learning Model

- JanBask Training: A Practical Guide to Implementing Random Forest in R with Example